In December 2025, a software engineer in Mexico finished his workday early enough to pick up his children from school. A lawyer in India sat down with an AI tutor and, for the first time in her life, read Shakespeare without flinching.

A soldier in Ukraine, sleepless under shelling, opened a chat window and began teaching himself something new, anything new, because learning was the only thing that kept the panic at bay.

None of these people knew each other. But all of them, during the same week, sat for an open-ended conversation with an AI interviewer built by Anthropic, the company behind Claude.

They were asked a deceptively simple set of questions: what do you want from AI, has it delivered, and what scares you? Over seven days, 80,508 people across 159 countries and 70 languages answered. The result, published in March 2026, is what Anthropic describes as the largest and most multilingual qualitative study ever conducted.

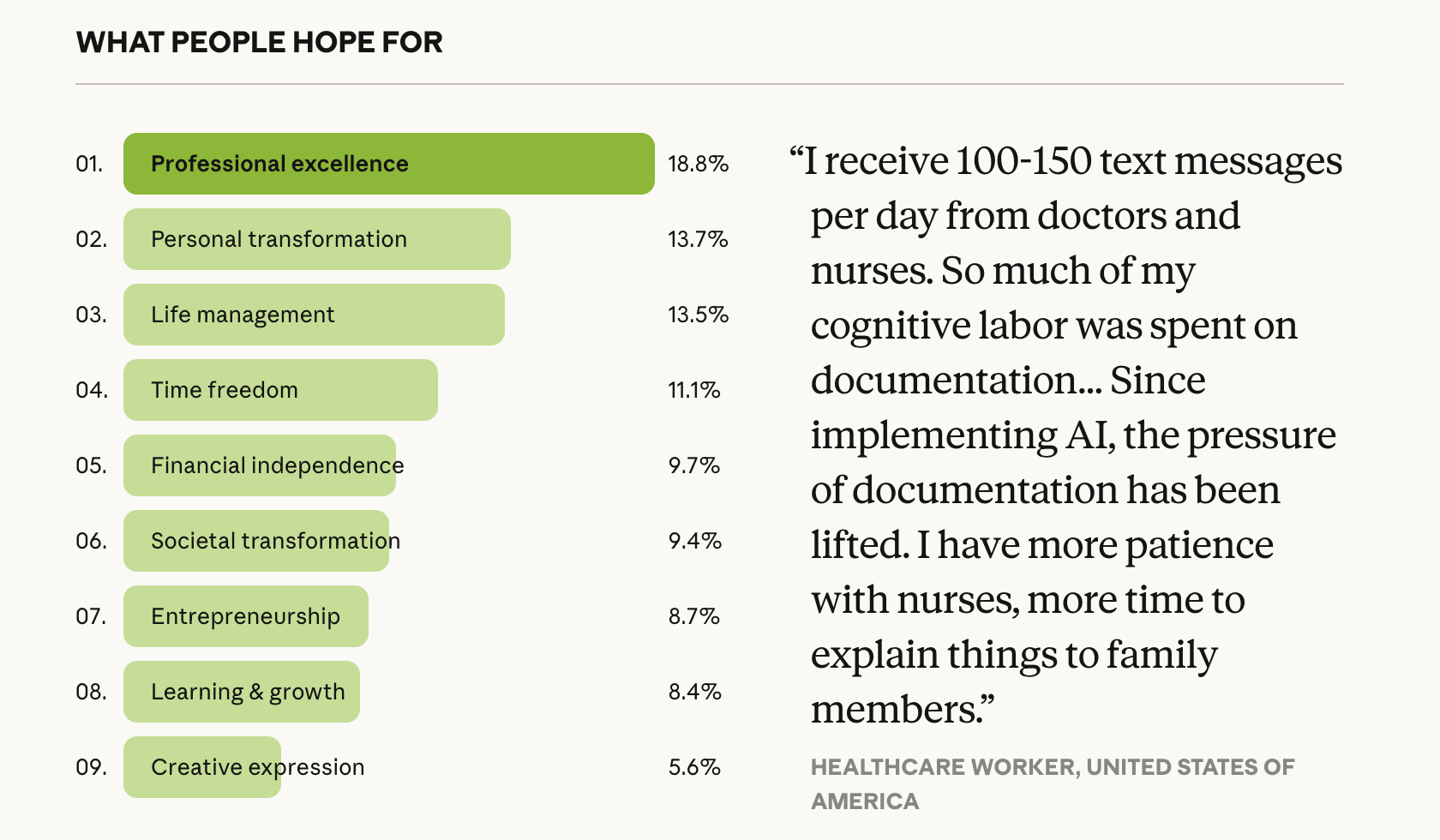

What respondents most wanted from AI, classified by Claude from their open-ended answers to “If you could wave a magic wand, what would AI do for you?” 1% of respondents did not articulate a vision. Credit: Anthropic

That claim is plausible. The previous benchmarks were the USC Shoah Foundation's Visual History Archive, with roughly 52,000 to 59,000 genocide testimonies across 40 languages, and the World Bank's “Voices of the Poor” project, synthesising the experiences of over 60,000 people in 60 countries.

Anthropic's study exceeds both in headcount, though the comparison should be handled with care: the depth and stakes of those earlier projects were of a different order.

What makes the Anthropic study worth reading closely, though, is not the scale. It is what the scale reveals. When you ask 81,000 people an open question about their hopes for a technology, and the most common answer is not “make me more productive” but something closer to “give me my life back,” you are no longer looking at a product survey. You are looking at a census of longing.

The arithmetic of wanting

Anthropic Interviewer, a version of Claude prompted to conduct adaptive conversations, asked each participant a fixed set of questions and then followed the thread wherever it led. Claude-powered classifiers then categorised every response.

The approach eases the old tradeoff in social science between depth and breadth, and it does so at a speed that would have been impossible even two years ago.

The headline numbers are instructive but not quite where the story lives. Roughly 19% of respondents wanted AI for “professional excellence,” the largest single category. Another 14% sought “personal transformation” through emotional growth or health support. Some 11% wanted more time for family and leisure. Nearly 10% wanted financial independence.

These figures, neatly sorted, can look like a product roadmap. They are not.

What the interviews revealed, again and again, was that the surface-level answer concealed a deeper one. People began by talking about automating emails or speeding up code, but when pushed on what that would actually enable, they said things like: I want to cook with my mother instead of finishing tasks.

The productivity framing was a vocabulary people had borrowed from the technology itself. The aspiration underneath was older and more human: relief from the cognitive overhead of modern life.

This pattern is familiar to anyone who has done qualitative research. The difference is the confidence you can place in it when it shows up across tens of thousands of conversations, in 70 languages, from Lagos to Lyon.

The stories no survey could capture

Surveys tell you what people tick. Interviews tell you why they hesitate before ticking. The richest material in Anthropic's report is not the category breakdowns but the quotes, which read less like customer feedback and more like fragments of memoir.

A butcher in Chile who had barely touched a computer before AI described venturing into entrepreneurship and finding motivation he never expected.

A homeless healthcare worker in the United States used AI to brainstorm a digital marketing business and, for the first time, saw a path to a house. A physician in Israel, suffering from a neurological condition that local specialists could not diagnose, used AI to find two scientific studies that led to effective treatment. A mute worker in Ukraine built a text-to-speech bot with Claude that let him communicate with friends in near-real time.

These are not stories about productivity. They are stories about access. And they cluster around a set of AI affordances that have nothing to do with speed: patience, availability, and the absence of judgement.

A student in India explained that his professor teaches 60 people and will not entertain many questions, but AI lets him ask anything at 2am. A lawyer in the same country described overcoming a lifelong maths phobia and realising she was not as unintelligent as she had once believed.

Eighty-one per cent of respondents said AI had already taken a concrete step towards their stated vision. That is a remarkably high fulfilment rate for a technology whose critics describe it as overhyped, and it suggests the gap between what people want from AI and what it delivers is narrower than the discourse implies.

The same hand that gives

The study's most intellectually honest contribution is what it calls “light and shade”: five recurring tensions in which the same AI capability that produces a benefit also generates a harm. People who valued AI for learning were three times more likely to also worry about cognitive atrophy.

Those who found emotional support in AI conversations were three times more likely to fear becoming dependent. The duality was not between optimists and pessimists. It lived inside the same person.

A graduate student in the United States confessed to telling Claude things she could not tell her partner, and wondered whether she was having an emotional affair. A South Korean student admitted to getting excellent grades by memorising AI's answers rather than learning the material, and described that as the moment of deepest self-reproach.

These tensions do not cancel out the optimism. They deepen it. And across most of the five tensions, the study found an asymmetry: benefits were grounded in lived experience, while harms leaned hypothetical.

The exception was reliability, where 79% of those who raised the concern had encountered the problem directly. Anyone who has watched a confident chatbot hallucinate a citation will recognise that statistic.

A geography of aspiration

The regional data is where the study shifts from interesting to genuinely useful. AI sentiment was majority-positive everywhere, with no country dipping below 60%, but the texture varied. In Sub-Saharan Africa, Central Asia, and South Asia, respondents were least likely to voice concerns and most likely to frame AI as an equaliser.

An entrepreneur in Cameroon described reaching professional competence in cybersecurity, UX design, marketing, and project management simultaneously, because in a tech-disadvantaged country, he could not afford many failures. An entrepreneur in Uganda explained that without being based in the US or UK, AI may be his only way to compete.

In Western Europe and North America, the mood was more guarded, with stronger concerns about governance, surveillance, and jobs. Concern about jobs and the economy, the strongest single predictor of overall AI sentiment, tracked closely with wealth. The pattern makes intuitive sense.

If you have a job worth protecting, AI looks like a threat. If you have never had fair access to the tools that might build one, AI looks like a door.

The mirror, and its distortions

The study has real limitations. These were active Claude users, not a representative sample. The interview asked about positive visions first. The interviewer was itself an AI product made by the company running the study. Anthropic acknowledges most of this.

What it does not dwell on is that the study's real contribution is methodological as much as substantive. Using AI to conduct qualitative interviews at a scale that was previously impossible, and then using AI to classify the results, is a genuinely new form of social science.

CloudResearch's Engage platform has been doing something similar for market research, and the approach is spreading. If it holds up under scrutiny, the implications extend beyond tech companies.

Imagine a government using AI interviewers to understand what citizens need from public services, in their own words, at the scale of a national census.

That future is closer than it sounds. And it arrives carrying the same duality the study itself describes: immense promise tangled with real risk, a technology that gives people voice and also, inevitably, shapes what they say.

But duality is not paralysis. The 81,000 people who spoke to Anthropic's interviewer were not frozen between hope and fear. They were navigating both, the way anyone does when something important is changing fast. And the thing they wanted most, the thread that ran through every region and every language, was not a feature. It was time: time to think, time to rest, time to be present with the people they love.

If there is a lesson in 81,000 open-ended conversations about artificial intelligence, it may be this: we are not as interested in the machine as we are in what the machine might give back to us.

And what we want back turns out to be startlingly, movingly simple.