In short: Stanford's 2026 AI Index Report finds the performance gap between the best American and Chinese AI models has collapsed to 2.7%, down from 17.5 to 31.6 percentage points in May 2023, even though US private AI investment is 23 times China's ($285.9 billion vs $12.4 billion). China leads in AI patents (69.7% of global filings), publications (23.2% of global output), industrial robot installations (nearly nine times the US figure), and energy infrastructure, while AI talent migration to the US has dropped 89% since 2017.

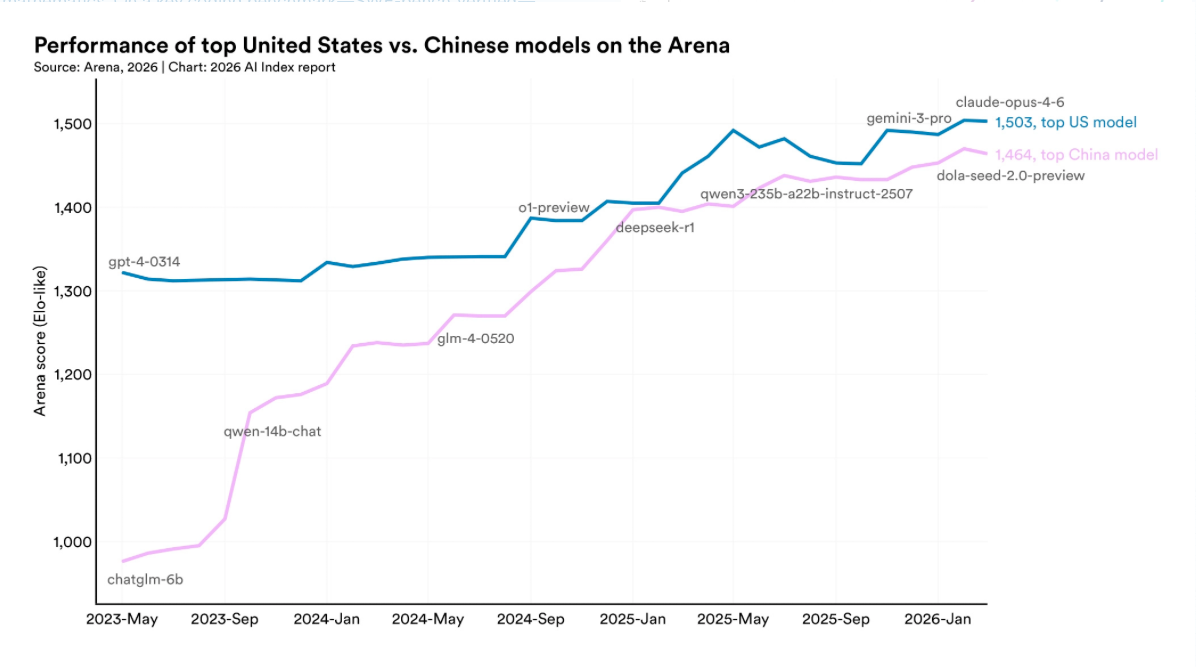

The performance gap between the best American and Chinese AI models has collapsed to 2.7%, according to the 2026 AI Index Report published this week by Stanford University's Institute for Human-Centered Artificial Intelligence. In May 2023, the gap was between 17.5 and 31.6 percentage points across major benchmarks. As of March 2026, Anthropic's Claude Opus 4.6 leads the global leaderboard with an Arena score of 1,503, while ByteDance's Dola-Seed-2.0-Preview sits at 1,464, a difference of 39 points. DeepSeek's R1 reasoning model briefly matched the top US model in February 2025, and American and Chinese models have traded the lead multiple times since.
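One plausible way to reconcile the 39-point Arena difference with the 2.7% headline figure is to express the gap relative to the trailing model's score. The snippet below is a minimal sketch under that assumption. The report's exact convention is not described in this article, and `relative_gap` is a hypothetical helper written only for illustration.

```python
# Arena scores as quoted in this article (March 2026 leaderboard).
TOP_US_SCORE = 1503  # Anthropic Claude Opus 4.6
TOP_CN_SCORE = 1464  # ByteDance Dola-Seed-2.0-Preview


def relative_gap(leader: float, follower: float) -> float:
    """Return the leader's margin as a percentage of the follower's score.

    Assumption: the headline figure divides by the trailing model's score.
    This is an illustrative convention, not the report's documented method.
    """
    return (leader - follower) / follower * 100


point_gap = TOP_US_SCORE - TOP_CN_SCORE
pct_gap = relative_gap(TOP_US_SCORE, TOP_CN_SCORE)

print(f"Point gap: {point_gap}")        # Point gap: 39
print(f"Relative gap: {pct_gap:.1f}%")  # Relative gap: 2.7%
```

Dividing by the leader's score instead gives roughly 2.6%, so the precise headline number depends on which convention is used.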

The 423-page report, the most comprehensive annual assessment of the global AI landscape, shows the United States outspending China 23 to 1 in private AI investment yet leading on the only metric that arguably matters, model performance, by less than 3%. The question the report raises without quite answering is whether that spending advantage is sustaining American leadership or whether China has found a way to compete without it.

Where each country leads

The United States dominates private AI investment, with $285.9 billion in 2025 compared with China's $12.4 billion. California alone accounted for $218 billion, more than 75% of the US total. American companies produced 50 notable AI models last year, compared with China's 30, though China's count doubled from 15 the previous year while America's grew more modestly. The US hosts 5,427 data centres, more than ten times any other country.
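The 23-to-1 ratio cited throughout the report follows directly from these private-investment totals. A quick sketch that checks it, together with California's share, using only the figures quoted in this article (in billions of US dollars):

```python
# 2025 private AI investment, in billions of US dollars, as quoted above.
us_private = 285.9
china_private = 12.4
california_private = 218.0

# How many times larger US private investment is than China's.
us_to_china_ratio = us_private / china_private

# California's share of the US total, as a percentage.
california_share = california_private / us_private * 100

print(f"US vs China: {us_to_china_ratio:.1f}x")      # US vs China: 23.1x
print(f"California share: {california_share:.0f}%")  # California share: 76%
```

As the report itself cautions, the China figure covers private capital only and excludes state guidance funds, so this ratio should not be read as a comparison of total AI spending.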

China leads in volume. Chinese researchers produced 23.2% of all global AI publications and 20.6% of citations, compared with 12.6% for the US. Chinese entities filed 69.7% of all AI patents worldwide. China installed 295,000 industrial robots in the most recent reporting period, nearly nine times the 34,200 installed in the United States. China's electricity reserve margin has never dipped below 80%, twice the necessary capacity. The US power grid, by contrast, suffers from decades of underinvestment, which the report identifies as a potential bottleneck for AI infrastructure growth.

The investment figures come with a significant caveat. The report notes that private investment data “likely understates” China's actual AI spending because the Chinese government channels resources through guidance funds and state-initiated investment vehicles that do not appear in private capital databases. The 23-to-1 spending ratio may be less dramatic than it appears.

The talent crisis

The most striking finding may be about people rather than models. The number of AI scholars moving to the United States has dropped 89% since 2017, with 80% of that decline occurring in the last year alone. The report describes the fall as “precipitous.” Switzerland now ranks first in the world for AI researchers and developers per capita.

The talent migration data complicates the narrative that American AI leadership is secure because of its investment advantage. If the researchers who build frontier models are increasingly choosing not to come to the US, the spending premium buys hardware and infrastructure but not the intellectual capital that turns compute into capability. DeepSeek demonstrated in January 2025 that a Chinese lab could match Silicon Valley's best with a fraction of the resources. The talent data suggests the conditions that produced DeepSeek are strengthening, not weakening.

What AI can and cannot do

The report documents performance gains that would have seemed implausible two years ago. On SWE-bench, a coding benchmark, model performance rose from 60% to nearly 100% in a single year. On graduate-level science questions, model accuracy hit 93%, above the expert human validator baseline of 81.2%. Google's Gemini Deep Think won a gold medal at the International Mathematical Olympiad. On Humanity's Last Exam, a benchmark built from questions intended to be extremely difficult for frontier models, those models gained 30 percentage points in a year.

But the report also documents what it calls a “jagged frontier.” The top model reads analog clocks correctly only 50.1% of the time. Robotic manipulation systems achieve 89.4% success in simulation but only 12% in real household tasks. Nearly half of the 500-plus clinical AI studies reviewed used exam-style questions rather than real patient data, and only 5% used actual clinical records. The gap between benchmark performance and real-world reliability remains wide in domains where errors have consequences.

Adoption, trust, and regulation

Generative AI was adopted by 53% of the population within three years of launch, faster than the personal computer or the internet. Eighty-eight per cent of organisations report using AI. Four in five university students now use generative AI tools. But the US ranks 24th globally in adoption at just 28.3%, behind Singapore at 61% and the UAE at 54%.

Public trust is also low. Only 31% of Americans trust their government to regulate AI, the lowest figure of any country surveyed and well below the global average of 54%. The expert-public disconnect is a central theme of the report: 73% of AI experts expect a positive impact on jobs, compared with 23% of the general public. Only a third of Americans expect AI to make their jobs better.

Forty-seven countries now have active AI legislation, but only 12 have enforcement mechanisms. Documented enforcement actions rose from 43 in 2024 to 156 in 2025. Compliance costs vary eightfold between jurisdictions. The EU AI Act is being phased into force, with most of its obligations applying from August 2026, but the broader regulatory picture is one of fragmentation rather than coordination.

The environmental cost

Training xAI's Grok 4 produced 72,816 tonnes of CO2 equivalent, roughly the emissions of driving 17,000 cars for a year. AI data centre power capacity reached 29.6 gigawatts globally, enough to power New York State at peak demand. The environmental section of the report reads as a counterweight to the performance gains: the models are getting better, but the cost of making them better is scaling alongside the capabilities.

What the numbers mean

The headline finding, that China has nearly closed the performance gap with the US, will dominate the policy conversation. But the report's deeper implication is about the relationship between spending and outcomes. The United States invested $285.9 billion in private AI capital last year. China invested $12.4 billion. The performance gap between their best models is 2.7%. Meanwhile, AI talent migration to the US has collapsed, China dominates patents and publications, and Chinese infrastructure investment in energy and manufacturing dwarfs America's.

The open-versus-closed-source debate adds another dimension. The top closed model now leads the top open model by 3.3%, up from 0.5% in August 2024, and six of the top ten Arena models are closed-source. The widening advantage of proprietary systems favours the American companies that dominate the closed-source tier, and it suggests that the open-source models behind much of China's catch-up may face diminishing returns.

Employment among software developers aged 22 to 25 has fallen nearly 20% since 2022. One-third of surveyed organisations expect AI to reduce their workforce in the coming year. The Foundation Model Transparency Index dropped from 58 to 40, with most frontier models reporting nothing on fairness, security, or human agency. Documented AI incidents rose 55% in a year.

The Stanford AI Index does not make policy recommendations. It presents data. But the data in the 2026 edition tells a story that should unsettle anyone who assumes American AI dominance is durable. The US leads on investment and model performance. China leads on talent pipeline, patents, publications, robotics, and energy infrastructure. The performance gap is 2.7% and shrinking. The spending gap is 23 to 1 and growing. One of those trends is sustainable. The report leaves it to the reader to decide which one.